Blockchain Scalability, a very real problem!

(if you want to help solve the problem, check out our blockchain courses and start experimenting)

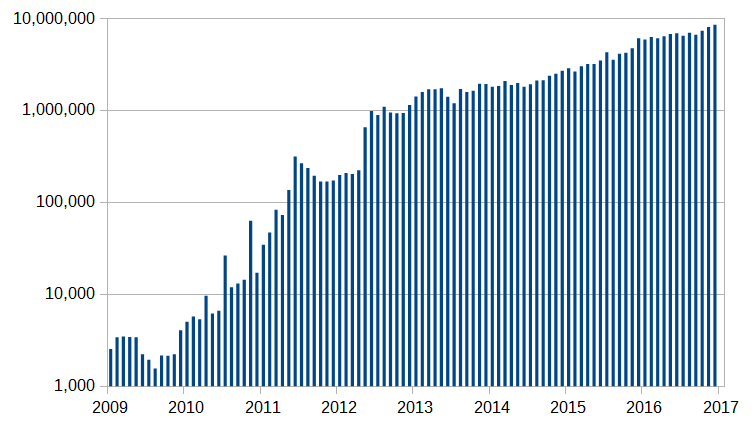

Cryptocurrencies are becoming more and more mainstream. In fact, let’s check out how popular bitcoin and ethereum have gotten over time. This is a graph of the number of daily bitcoin transactions tracked over the years:

Image Courtesy: Wikipedia

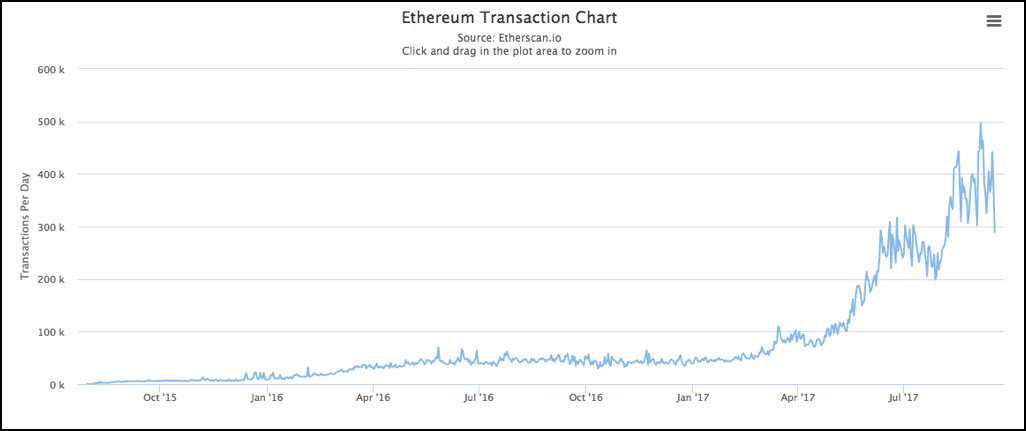

And here we have the number of Ethereum transactions per month over the years:

Image Courtesy: Etherscan

Now, this may look very impressive, but here is the thing: the initial design of cryptocurrencies was not meant for widespread use and adoption. While things were manageable when the number of transactions was small, a host of issues have come up as they have gotten more popular.

The scalability problem of cryptocurrencies

For bitcoin and ethereum to compete with more mainstream systems like Visa and PayPal, they need to seriously step up their game when it comes to transaction throughput. While PayPal manages 193 transactions per second and Visa manages 1667 transactions per second, ethereum does only 20 transactions per second while bitcoin manages a whopping 7 transactions per second! The only way that these numbers can be improved is if they work on their scalability.

If we were to categorize the main scalability problems in the cryptocurrencies, they would be:

- The time taken to put a transaction in a block.

- The time taken to reach a consensus.

The Time Taken To Put A Transaction In The Block

In bitcoin and ethereum, a transaction goes through when a miner puts the transaction data in the blocks that they have mined. So suppose Alice wants to send 4 BTC to Bob, she will send this transaction data to the miners, the miner will then put it in their block and the transaction will be deemed complete.

However, as bitcoin becomes more and more popular, this becomes more time-consuming. Plus, there is also the small matter of transaction fees. You see, when miners mine a block, they become temporary dictators of that block. If you want your transactions to go through, you will have to pay a toll to the miner in charge. This “toll” is called the transaction fee.

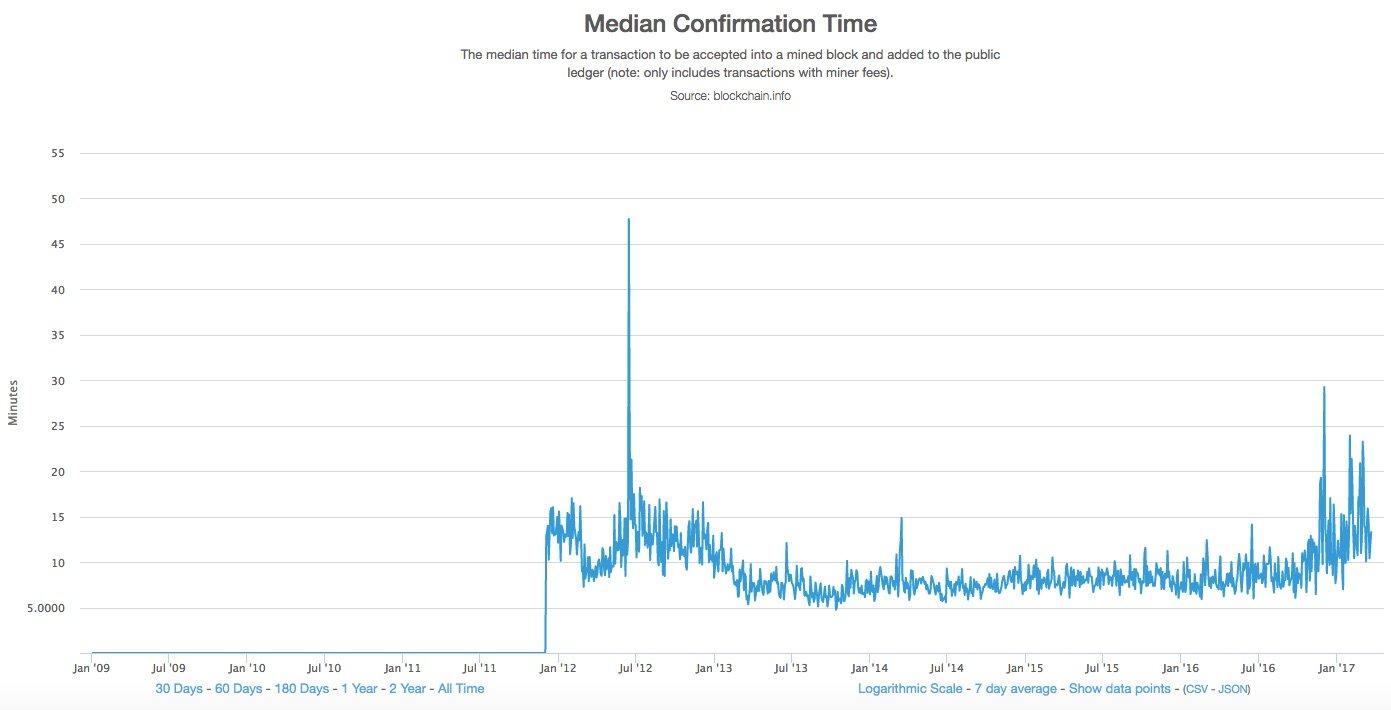

The higher the transaction fees, the faster the miners will put the transactions in their block. While this is ok for people who have a huge repository of bitcoins, it might not be the most financially viable option for everyone else. In fact, here is an interesting study for you. This is the amount of time that people had to wait if they paid the lowest possible transaction fee:

If you pay the lowest possible transaction fees, then you will have to wait for a median time of 13 mins for your transaction to go through.

More often than not, the transactions had to wait until a new block was mined (which takes about 10 mins in bitcoin), because the earlier blocks would fill up with transactions. Bitcoin has a block size limit of 1 MB (this will be expanded on later), which severely limits its transaction carrying capacity.
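To make the fee market and the 1 MB cap a bit more concrete, here is a minimal sketch. It is not how Bitcoin Core actually selects transactions. The 250-byte average transaction size, the `Tx` class, the `select_transactions` helper and the sample fees are all assumptions made up for illustration; only the 1 MB limit and the 10 minute block time come from the text above.

```python
from dataclasses import dataclass

MAX_BLOCK_BYTES = 1_000_000     # 1 MB block size limit (from the text)
BLOCK_INTERVAL_SECONDS = 600    # roughly one block every 10 minutes (from the text)
AVG_TX_BYTES = 250              # assumption: size of a typical simple transaction

# Rough upper bound on throughput under these assumptions.
tx_per_block = MAX_BLOCK_BYTES // AVG_TX_BYTES
tx_per_second = tx_per_block / BLOCK_INTERVAL_SECONDS


@dataclass(frozen=True)
class Tx:
    txid: str
    size_bytes: int
    fee_satoshis: int

    @property
    def fee_rate(self) -> float:
        # Fee paid per byte of block space the transaction occupies.
        return self.fee_satoshis / self.size_bytes


def select_transactions(mempool, max_block_bytes=MAX_BLOCK_BYTES):
    """Greedily pick the highest fee-rate transactions that still fit."""
    chosen, used = [], 0
    for tx in sorted(mempool, key=lambda t: t.fee_rate, reverse=True):
        if used + tx.size_bytes <= max_block_bytes:
            chosen.append(tx)
            used += tx.size_bytes
    return chosen


mempool = [
    Tx("low-fee", 250, 250),       # 1 satoshi per byte
    Tx("mid-fee", 250, 5_000),     # 20 satoshi per byte
    Tx("high-fee", 250, 25_000),   # 100 satoshi per byte
]

# A deliberately tiny block (room for two transactions) to show the effect.
block = select_transactions(mempool, max_block_bytes=500)
left_waiting = [tx.txid for tx in mempool if tx not in block]

print(tx_per_block, round(tx_per_second, 1))
print([tx.txid for tx in block], left_waiting)
```

Under these assumptions a full block holds about 4000 transactions, which works out to roughly 7 per second, and the lowest-fee transaction is the one left waiting for the next block.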

Ok, so what about ethereum?

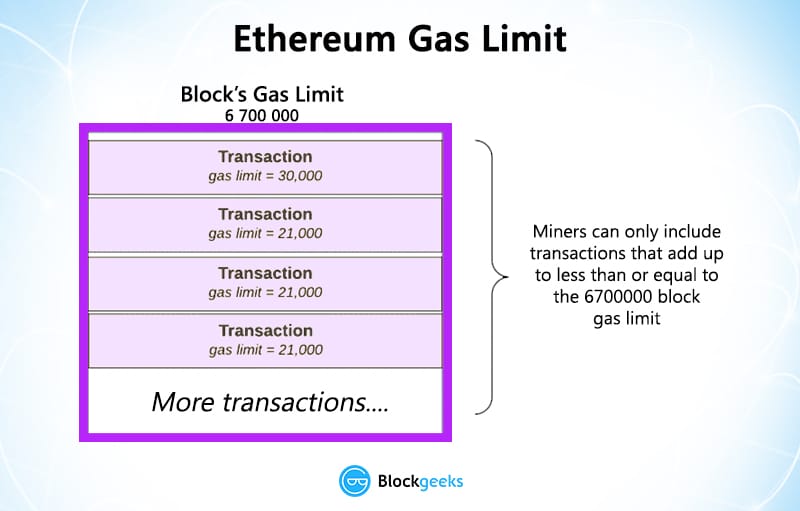

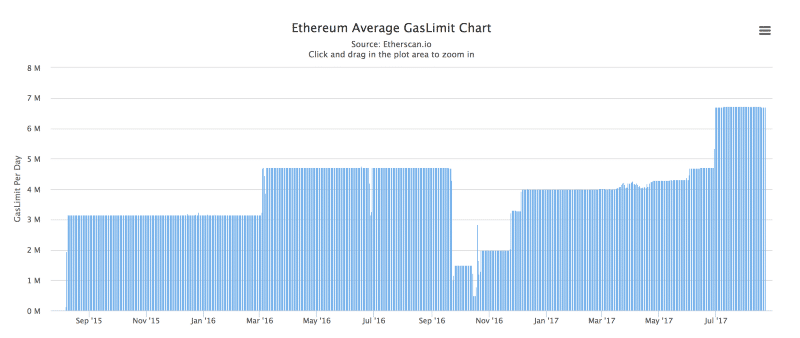

Theoretically speaking, Ethereum is supposed to process 1000 transactions per second. However, in practice, ethereum is limited by a gas limit of about 6.7 million on each block.

To understand what “gas” means, think of this situation. Alice has issued a smart contract for Bob to execute. Bob sees that the steps in the contract will cost X amount of gas, where gas is a measure of the computational effort required on Bob’s part. Accordingly, he will charge Alice for the amount of gas he used up.

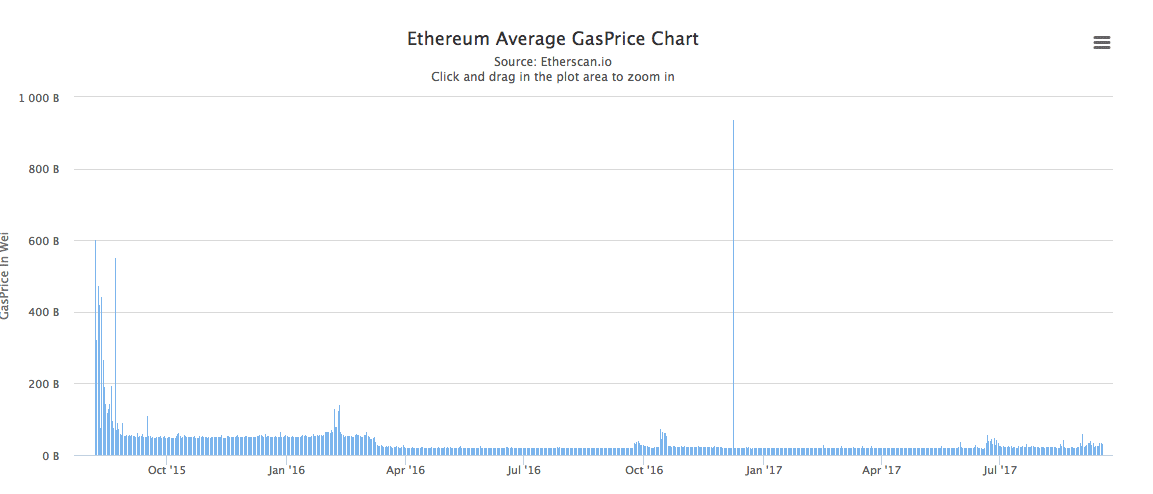

This is what the gas price chart looks like:

Image courtesy: Etherscan.

What does this mean for Blockchain scalability?

Since each block has a gas limit, the miners can only add transactions whose gas requirements add up to something which is equal to or less than the gas limit of the block.

Once again, the number of transactions that can go through is limited.
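Here is a rough sketch of what that limit means for a miner putting a block together. The 6.7 million figure is the one quoted above and 21,000 gas is the cost of a plain ether transfer; the other gas amounts, the transaction names and the `pack_block` helper are hypothetical.

```python
BLOCK_GAS_LIMIT = 6_700_000  # figure quoted in the text


def pack_block(pending, gas_limit=BLOCK_GAS_LIMIT):
    """Walk the pending list in order, skipping anything that would exceed the limit."""
    included, gas_used = [], 0
    for name, gas in pending:
        if gas_used + gas > gas_limit:
            continue  # does not fit; it has to wait for a later block
        included.append(name)
        gas_used += gas
    return included, gas_used


pending = [
    ("simple transfer", 21_000),
    ("token sale contract call", 4_000_000),     # hypothetical gas cost
    ("large contract deployment", 3_000_000),    # hypothetical gas cost
    ("another transfer", 21_000),
]

included, gas_used = pack_block(pending)
print(included, gas_used)
```

In this made-up example the large deployment gets left out, because adding it would push the block past its gas limit.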

The Time Taken To Reach A Consensus

Currently, all blockchain based currencies are structured as a peer-to-peer network. The participants, aka the nodes, are not given any special privileges. The idea is to create an egalitarian network. There is no central authority, nor is there any hierarchy. It is a flat topology.

All decentralized cryptocurrencies are structured like this because of a simple reason, to stay true to their philosophy. The idea is to have a currency system, where everyone is treated as an equal and there is no governing body, which can determine the value of the currency based on a whim. This is true for both bitcoin and ethereum.

Now, if there is no central entity, how would everyone in the system get to know that a certain transaction has happened? The network follows the gossip protocol. Think of how gossip spreads. Suppose Alice sent 3 ETH to Bob. The nodes nearest to her will get to know of this, and then they will tell the nodes closest to them, and then they will tell their neighbors, and this will keep on spreading out until everyone knows. Nodes are basically your nosy, annoying relatives.
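The way the news spreads can be sketched as a simple walk over the network graph. This is only a toy model: the five-node network and the `gossip_rounds` helper are made up, and real gossip protocols deal with latency, dropped messages and peers joining and leaving.

```python
from collections import deque


def gossip_rounds(peers, origin):
    """Return how many hops it takes for news from `origin` to reach each node."""
    hops = {origin: 0}
    queue = deque([origin])
    while queue:
        node = queue.popleft()
        for neighbour in peers[node]:
            if neighbour not in hops:          # each node passes the news on only once
                hops[neighbour] = hops[node] + 1
                queue.append(neighbour)
    return hops


# Hypothetical network: Alice's transaction has to reach Bob through relays.
peers = {
    "Alice": ["N1", "N2"],
    "N1": ["Alice", "N3"],
    "N2": ["Alice", "N3"],
    "N3": ["N1", "N2", "Bob"],
    "Bob": ["N3"],
}

hops = gossip_rounds(peers, "Alice")
print(hops)
```

Every node eventually hears about the transaction, but the ones further away from Alice hear about it later.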

Remember, the nodes follow a trustless system. What this means is, just because node A says that a transaction is valid doesn’t mean that node B will believe it to be so. Node B will do their own set of calculations to see whether the transaction is actually valid or not. This means, that every node must have their own copy of the blockchain to help them do so. As you can imagine, this makes the whole process very slow.

The problem is that, unlike other pieces of technology, the more nodes there are in a cryptocurrency network, the slower the whole process becomes. Consensus happens in a linear manner. Suppose there are 3 nodes: A, B and C.

For consensus to occur, first A would do the calculations and verify, then B would do the same, and then C.

However, if there is a new node in the system called “D”, that would add one more node to the consensus system, which will increase the overall time period. As cryptocurrencies have become more popular, the transaction times have gotten slower.

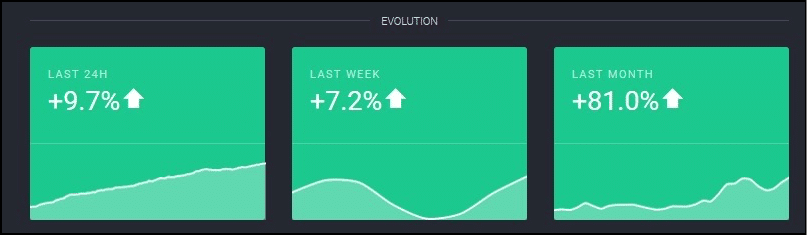

This is especially a problem with ethereum, because it has the highest number of nodes among all cryptocurrencies. Thanks to the ICO craze, everyone wants to have a piece of ethereum, which has significantly increased the number of nodes in its network. In fact, as of May 2017, ethereum had 25,000 nodes as compared to Bitcoin’s 7000!! That’s more than 3 times as many. In fact, the number of nodes increased by 81% from April to May…that’s nearly double!

Image Courtesy: Trust Nodes.

So what are the solutions to the Blockchain scalability issues?

Both ethereum and bitcoin have come up with a host of solutions which have either already been implemented or are going to be implemented. Let’s go through some of the major ones.

The ones that we will be covering are:

- Segwit.

- Block Size Increase.

- Sharding.

- Proof Of Stake.

- Off Chain State Channels.

- Plasma

Segwit (Exclusive only to bitcoin)

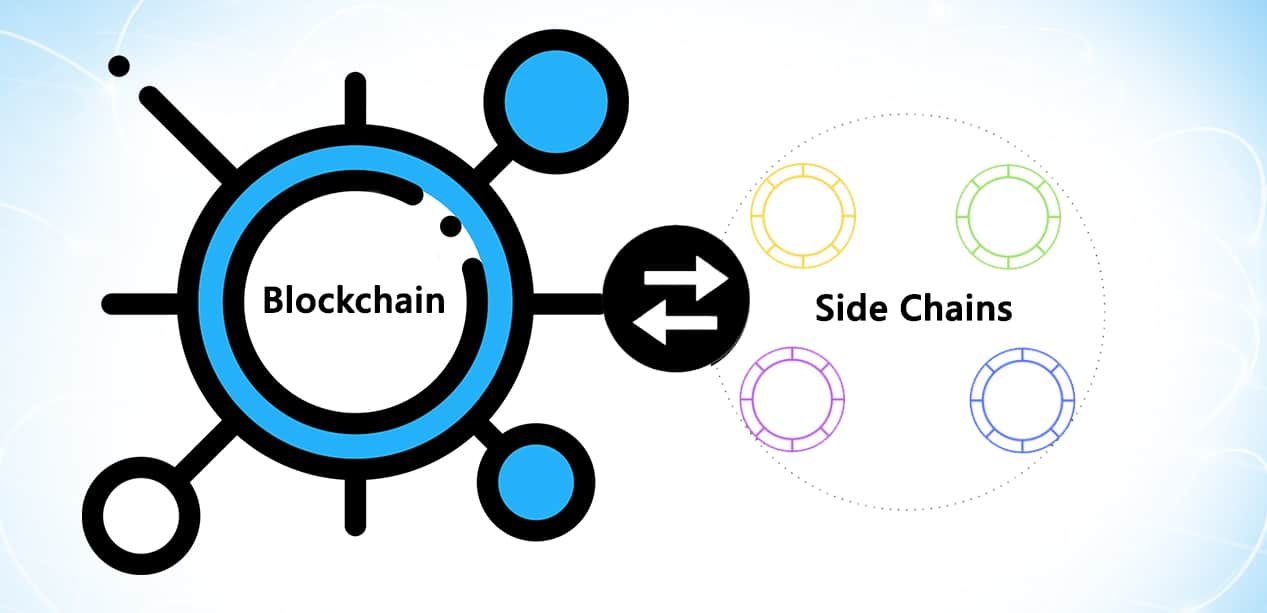

Blockstream’s Dr. Pieter Wuille envisioned Segwit as one of the features of the sidechain which will run parallel to the main bitcoin blockchain.

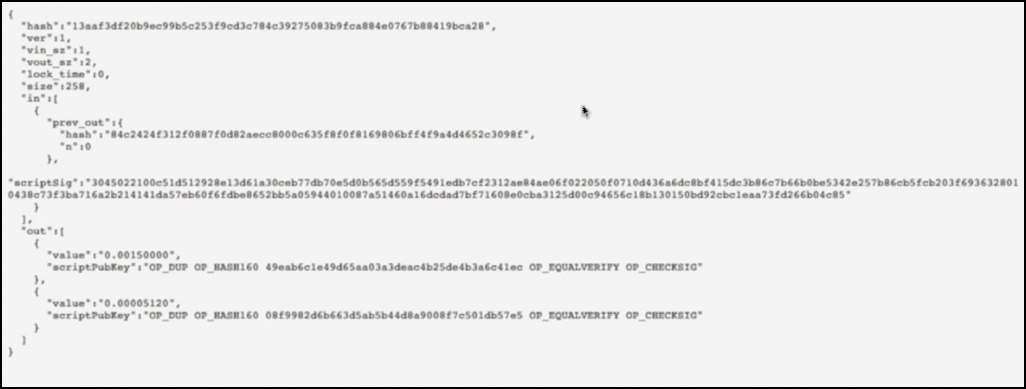

Activating Segwit aka Segregated Witness would mean that all the signature data of each and every transaction will move from the main chain to the side chain. What do we mean by signature data? Let’s look at the behind the scenes data of a transaction:

The transaction details code

This is what the transaction looks like in the code form. Suppose Alice wants to send 0.0015 BTC to Bob and in order to do so, she sends inputs which are worth 0.0015770 BTC. This is what the transaction detail looks like:

Image courtesy: djp3 youtube channel.

The first thing that you see:

Is the name of the Transaction aka the hash of the input and output value.

- Vin_sz is the number of inputs. Since Alice is funding this transaction using only one of her previous transactions, it is 1.

- Vout_sz is 2 because the only outputs are Bob and the change.

This is the Input data:

See the input data? Alice is only using one input transaction as vin_sz is 1. The input data is 0.0015770 BTC.

Below the input data is her signature data (Remember this for the next section)

Underneath all this is the output data:

- The first part of the data signifies that Bob is getting 0.0015 BTC.

- The second part signifies that 0.00005120 BTC is what Alice is getting back as change.

- Now, remember that our input data was 0.0015770 BTC? This is greater than (0.0015 + 0.00005120). The difference between these two values is the transaction fee that the miners are collecting.

This is the anatomy of a simple transaction.
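The fee arithmetic from the example above can be checked in a few lines. The amounts are the ones from the transaction; the variable names are just for illustration, and `Decimal` is used so that the small BTC values are not distorted by floating point rounding.

```python
from decimal import Decimal

SATOSHIS_PER_BTC = 100_000_000

input_btc = Decimal("0.0015770")     # what Alice puts in
to_bob_btc = Decimal("0.0015")       # first output: payment to Bob
change_btc = Decimal("0.00005120")   # second output: change back to Alice

# Whatever the outputs do not claim is left for the miner as the fee.
fee_btc = input_btc - (to_bob_btc + change_btc)
fee_satoshis = int(fee_btc * SATOSHIS_PER_BTC)

print(fee_btc, fee_satoshis)
```

So the miner collects 0.0000258 BTC, or 2580 satoshis, for including this transaction.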

So what will happen on activating Segwit?

The problem with this signature data is that it is very bulky. In fact, 65% of the data taken up by the transaction is because of the signature. And this data is useful only for the initial verification process, it is not needed later on at all.

The signature data will move on from the main chain to the extended block in the parallel chain:

What this will do is that it’ll free up a lot of space in the block itself for more transactions.

It was envisioned that the signature data would be arranged in the form of a Merkle tree in the side chain. The Merkle root of the transactions was placed in the block along with the coinbase transaction (the first transaction in each block which basically signifies the block reward). However, on doing this, the developers stumbled upon something unexpected. They discovered that on putting the merkle root in that particular place they somehow increased the overall block size limit WITHOUT increasing the block size limit!

As of August 24, 2017 segwit was activated on bitcoin. Let’s see what Segwit had to say about that:

Image courtesy: segwit.co

Pros and Cons of Segwit

Pros of segwit:

- Increases the number of transactions that a block can take.

- Decreases transaction fees.

- Reduces the size of each individual transaction.

- Transactions can now be confirmed faster because the waiting time will decrease.

- Helps in the scalability of bitcoin.

- Since the number of transactions in each block will increase, it may increase the total overall fees that a miner may collect.

- Removes transaction malleability and aids in the activation of lightning protocol (more on this later)

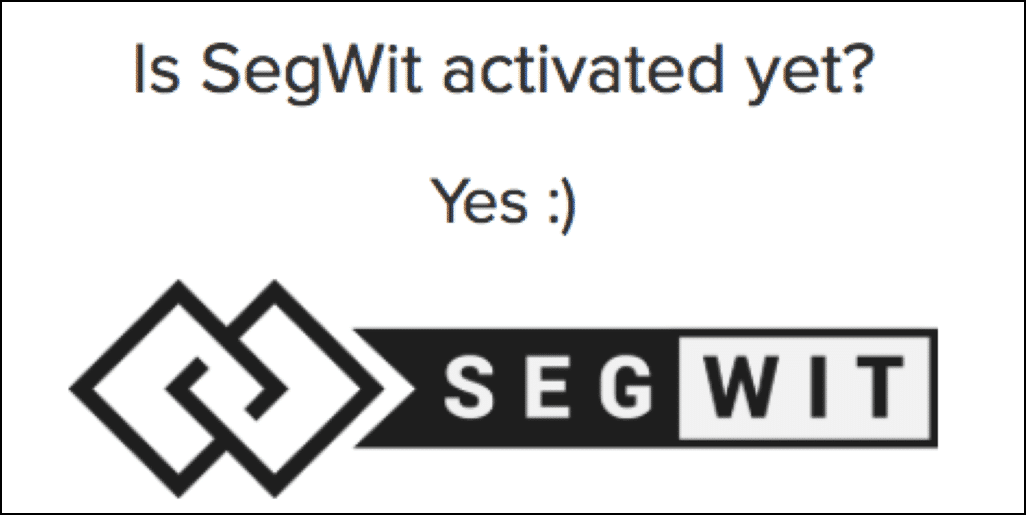

- Removes the quadratic hashing problem: Quadratic hashing is an issue that comes along with block size increase. The problem is that in certain transactions, the time spent on signature hashing scales quadratically.

Image courtesy: Bitcoincore.org

Basically, doubling the size of a transaction can double the number of signature operations and also double the amount of data that has to be hashed for each of those signatures. The verification work therefore grows fourfold rather than twofold, which increases the verification time by a huge amount. This opens the gates for malicious parties who may want to spam the blockchain.

Segwit resolves this by changing the calculation of the signature hash, making the whole process more efficient as a result.
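To see why this is called quadratic, here is a deliberately simplified cost model. The 150 bytes per input figure and both helper functions are assumptions for illustration only; real transactions and the actual signature hash rules are more involved.

```python
BYTES_PER_INPUT = 150  # assumption: rough size of one input, for illustration only


def legacy_bytes_hashed(n_inputs):
    tx_size = n_inputs * BYTES_PER_INPUT
    # Old rule (simplified): every input's signature check re-hashes
    # roughly the whole transaction.
    return n_inputs * tx_size


def segwit_bytes_hashed(n_inputs):
    # Revised rule (simplified): every input hashes a roughly fixed amount of data.
    return n_inputs * BYTES_PER_INPUT


for n in (100, 200):
    print(n, legacy_bytes_hashed(n), segwit_bytes_hashed(n))
```

In this model, doubling the number of inputs quadruples the hashing work under the old rule, but only doubles it under the revised one.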

Cons of segwit:

- Miners will now get lower transaction fees for each individual transaction.

- The implementation is complex and all the wallets will need to implement segwit themselves. There is a big chance that they may not get it right the first time.

- It will significantly increase the usage of resources, since capacity, transactions and bandwidth will all increase.

- As the creation of Bitcoin Cash shows, it did ultimately split up the Bitcoin Core community.

- Another problem with Segwit is the maintenance. The sidechain containing the signature data will need to be maintained by miners as well. However, unlike the main blockchain, the miners have no financial incentive to do so, so it will need to be done pro bono or some reward scheme needs to be thought of to incentivize the miners.

Block size Increase

Now, since the main problem of bitcoin and ethereum has been the limited block size, why don’t we just increase it? Bitcoin originally wasn’t supposed to have a 1 MB limit, but Satoshi was forced to add one to keep Bitcoin from being bogged down by spam transactions.

While this might sound like a good idea in theory, implementing it has been anything but straightforward. In fact, this has given birth to a lot of debate in the Bitcoin community, with sides passionately arguing both for and against the block size increase. Let’s check out some of these arguments:

Arguments against block size increase

- Miners will lose incentive because transaction fees will decrease: Since the block sizes will increase, transactions will be easily inserted, which will significantly lower the transaction fees. There are fears that this may disincentivize the miners and they may move on to greener pastures. If the number of miners decreases, then this will decrease the overall hashrate of bitcoin.

- Bitcoins shouldn’t be used for everyday purposes: Some members of the community don’t want bitcoin to be used for regular everyday transactions. These people feel that bitcoins have a higher purpose than just being regular everyday currency.

- It will split the community: A block size increase will inevitably cause a fork in the system which will make two parallel bitcoins and hence split the community in the process. This may destroy the harmony in the community.

- It will cause increased centralization: Since the network size will increase, the amount of processing power required to mine will increase as well. This will take out all the small mining pools and give mining powers exclusively to the large scale pools. This will in turn increase centralization which goes against the very essence of bitcoins.

Arguments for the block size increase

- Block size increase actually works to the miner’s benefit: Increased block size will mean more transactions per block, which will in turn increase the amount of transaction fees that a miner may make from mining a block.

- Bitcoin needs to grow more and be more accessible for the “common man”. If the block size doesn’t change then there is a very real possibility that the transactions fees will go higher and higher. When that happens, the common man will never be able to use it and it will be used exclusively only by the rich and big corporations. That has never been the purpose of bitcoin.

- The changes won’t happen all at once, they will gradually happen over time. The biggest fear that people have when it comes to the block size change is that too many things are going to be affected at the same time and that will cause major disruption. However, people who are “pro block size increase” think that that’s an unfounded fear as most of the changes will be dealt with over a period of time.

- There is a lot of support for block size increase already and people who don’t get with the times may get left behind.

- Segwit is not a permanent fix.

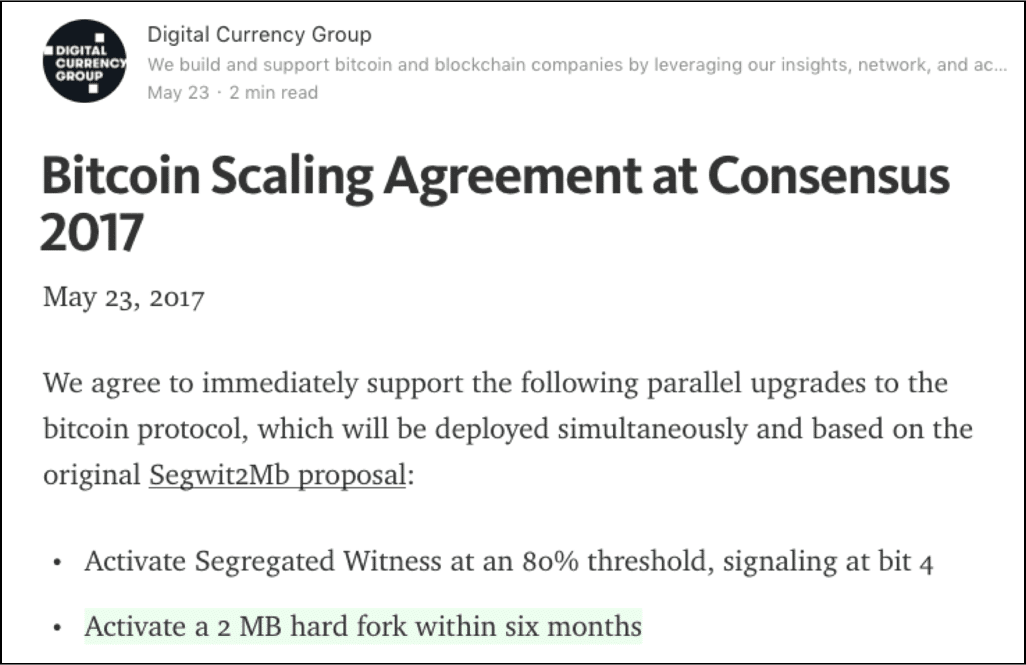

Anyway, on May 21 2017, the New York Agreement took place where it was decided that Segwit will be activated and the block size will increase to 2 MB.

Image courtesy: DCG article in Medium.

People who were not happy with the idea of Segwit activating forked away from the main chain and made Bitcoin Cash, which has a block size limit of 8 MB.

A block size increase was also suggested for ethereum but because of a lot of reasons people are not really keen on doing that in Ethereum as of writing:

- Firstly, the main thing that is hindering Ethereum’s scalability is the speed of consensus among nodes. Increasing the block size will still not solve this problem. In fact, as the number of transactions per block increases, the number of calculations and verifications per node will increase as well.

- In order to accommodate for more and more transactions, the block sizes need to be increased periodically. This will centralize the system more because normal computers and users won’t be able to download and preserve such bulky blockchains. This goes against the egalitarian spirit of a blockchain.

- Finally, block size increase will happen only via hardfork, which can split the community. The last time a major hardfork happened in ethereum the entire community was divided and two separate currencies came about. People don’t really want this to happen again.

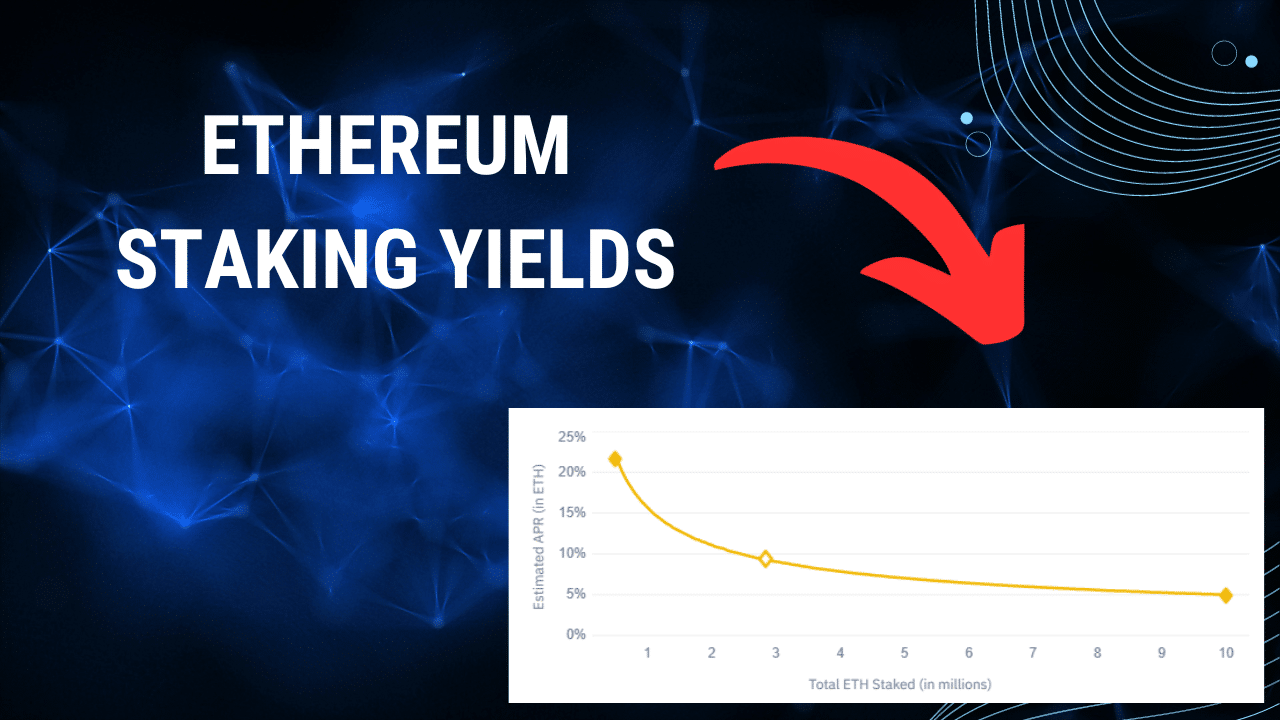

Proof Of Stake

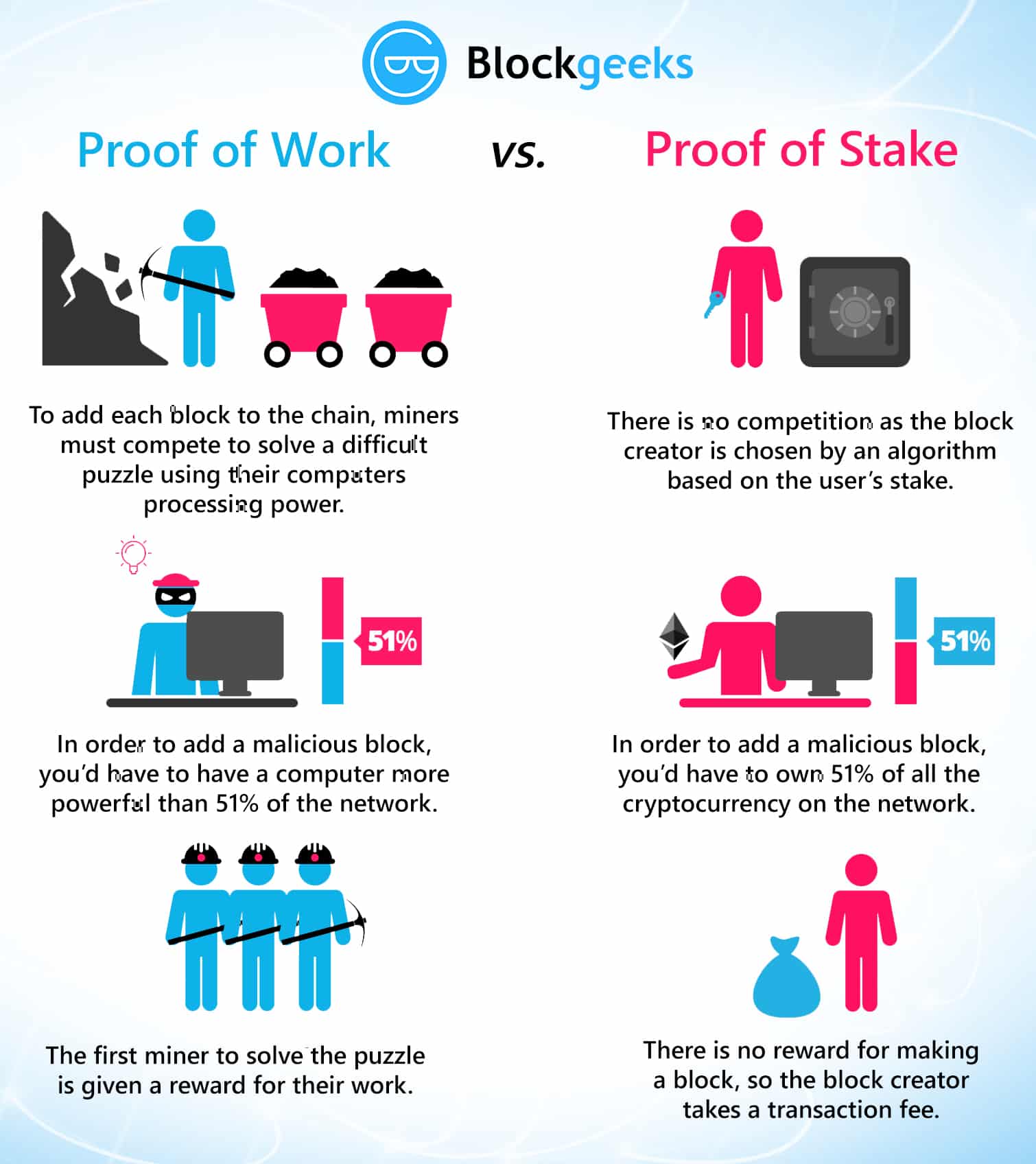

The change from proof of work to proof of stake

One of the biggest things happening right now is Ethereum’s shift from proof of work to proof of stake.

- Proof of work: This is the protocol that most cryptocurrencies like ethereum and Bitcoin have been following so far. This means that miners “mine” cryptocurrencies by solving crypto-puzzles using dedicated hardware.

- Proof of stake: This protocol will make the entire mining process virtual. In this system we have validators instead of miners. The way it works is that as a validator, you will first have to lock up some of your ether as stake. After doing that you will then start validating blocks which basically means that if you see any blocks that you think can be appended to the blockchain, you can validate it by placing a bet on it. When and if, the block gets appended, you will get a reward proportional to the stake you have invested. If, however, you bet on the wrong or the malicious block, the stake that you have invested will be taken away from you.
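The betting idea in the proof of stake description above can be sketched like this. It is a toy model, not Casper: the 5% reward, the validator names, the block labels and the `settle_bets` helper are all hypothetical.

```python
def settle_bets(stakes, bets, accepted_block, reward_percent=5):
    """Return each validator's balance after a block has been appended.

    stakes: validator -> ether locked up as stake (whole units)
    bets:   validator -> the block that validator bet on
    """
    balances = {}
    for validator, stake in stakes.items():
        if validator not in bets:
            balances[validator] = stake                          # sat this round out
        elif bets[validator] == accepted_block:
            reward = stake * reward_percent // 100               # proportional to stake
            balances[validator] = stake + reward
        else:
            balances[validator] = 0                              # wrong bet: stake is lost
    return balances


stakes = {"alice": 100, "bob": 40, "mallory": 60}
bets = {"alice": "block-good", "bob": "block-good", "mallory": "block-bad"}

balances = settle_bets(stakes, bets, accepted_block="block-good")
print(balances)
```

Alice earns more than Bob because she has more at stake, while Mallory, who bet on the wrong block, loses her stake.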

To implement “proof of stake” ethereum is going to use the Casper consensus algorithm. In the beginning it is going to be a hybrid style system where the majority of the blocks will still be handled the proof of work style while every 100th block is going to be checked via proof of stake. What this will do is provide a real world test for proof of stake on Ethereum’s platform. But what does that mean for ethereum and what are the advantages of this protocol? Let’s take a look.

Advantages of proof of stake

- Lowers the overall energy and monetary cost: The world’s bitcoin miners spend around $50,000 per hour on electricity. That’s $1.2 million per day, $36 million per month and ~$450 million per year! Just wrap your head around those numbers and the amount of power being wasted. By using “Proof-of-stake” you are making the whole process completely virtual and cutting off all these costs.

- No ASIC advantage: Since the whole process will be virtual, it wouldn’t depend on who has the better equipment or ASICs (application-specific integrated circuit).

- Makes 51% attack harder: A 51% attack happens when a group of miners gains more than 50% of the world’s hashing power. Because proof of stake does not rely on hashing power, this attack becomes much harder to pull off.

- Malicious-free validators: Any validator who has their funds locked up in the blockchain would make sure that they are not adding any wrong or malicious blocks to the chain, because that would mean their entire stake invested would be taken away from them.

- Block creation: Makes the creation of newer blocks and the entire process faster. (More on this in the next section).

- Scalability: Makes the blockchain scalable by introducing the concept of “sharding” (More on this later.)

How does this help in Blockchain scalability?

Introducing proof-of-stake is going to make the blockchain a lot faster because it is much simpler to check who has the most stake than to see who has the most hashing power. This makes coming to a consensus much simpler. Plus, implementing a “proof of stake blockchain” is an integral part of Serenity, the 4th and final form of ethereum (more on this in a bit.)

At the same time proof-of-stake makes the implementation of sharding easier. In a proof-of-work system it will be easier for an attacker to attack individual shards which may not have high hashrate.

Also, in POS validators won’t be getting a block reward, and they can only earn via transaction fees. This incentivizes them to increase the block size to get in more transactions (via gas management).

What is the future of proof of stake?

As of right now, Casper stage one is going to be implemented on the blockchain, wherein every 100th block will be checked via proof-of-stake. Yoichi Hirai from the Ethereum Foundation has been running Casper scripts through mathematical bug detectors to make sure that they are completely bug free.

Eventually, the plan is to move the majority of the block creation to proof-of-stake, and the way they are planning to do that is….by entering the ice age. The “ice age” is a difficulty time bomb which is going to make mining exponentially more difficult. Having an impossibly high difficulty will greatly slow down block production, which in turn will reduce the speed of the entire blockchain and the DApps running on it. This will force everyone involved in ethereum to move on to proof-of-stake.
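To get a feel for “exponentially more difficult”, here is a small sketch of the bomb on its own. It assumes the Homestead-era rule, where an extra term of 2 to the power of (block number divided by 100,000, rounded down, minus 2) is added to the difficulty; the `bomb_term` helper name is made up, and the rest of the difficulty adjustment is left out entirely.

```python
def bomb_term(block_number: int) -> int:
    """Extra difficulty added by the time bomb alone (assumed Homestead-era rule)."""
    period = block_number // 100_000
    # For the first two periods the term rounds down to zero.
    return 2 ** (period - 2) if period >= 2 else 0


for block_number in (200_000, 1_000_000, 4_000_000, 4_100_000):
    print(block_number, bomb_term(block_number))
```

The term doubles every 100,000 blocks, so it is negligible early on and then grows very quickly.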

However, this entire transition is not without its obstacles. One of the biggest fears that people have is that miners may force a hardfork in the chain at a point before the ice age begins and then continue mining on that chain. This could be potentially disastrous because that would mean there could be 3 different chains of ethereum running at the same time: Ethereum classic, ethereum proof of work and Ethereum proof of stake.

This is currently all speculation. For now, the fact is that, for a scalable model, it is critical for ethereum to use proof of stake to get the speed and the flexibility it requires.

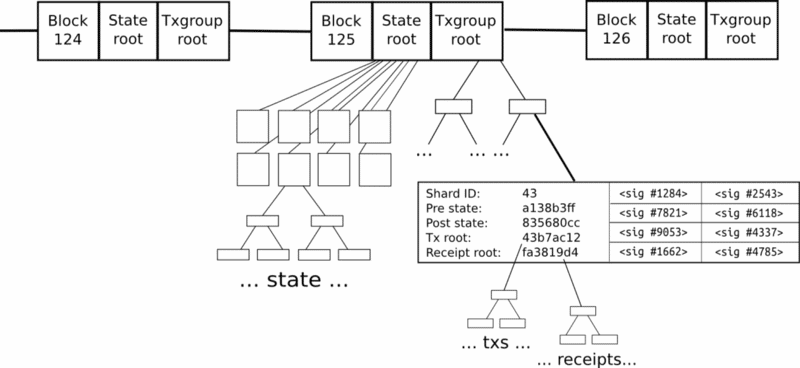

Sharding

The biggest problem that ethereum is facing is the speed of transaction verification. Each and every full node in the network has to download and save the entire blockchain. What sharding does is break the state, and the transactions that act on it, into shards which are spread across the network. The nodes work on individual shards side-by-side. This in turn decreases the overall time taken.

Imagine that ethereum has been split into thousands of islands. Each island can do its own thing. Each island has its own unique features and everyone belonging to that island, i.e. the accounts, can interact with each other AND they can freely indulge in all its features. If they want to contact other islands, they will have to use some sort of protocol.

So, the question is, how is that going to change the blockchain?

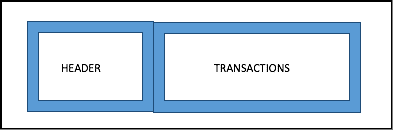

What does a normal block in bitcoin or ethereum (pre-sharding) look like?

So, there is a block header and the body which contains all the transactions in the block. The Merkle root of all the transactions will be in the block header.
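If you are wondering how a whole list of transactions boils down to one Merkle root, here is a minimal sketch. It assumes the Bitcoin convention of double SHA-256 and of pairing the last hash with itself when a level has an odd count; it ignores Bitcoin’s byte-order details, and the transaction names are placeholders.

```python
import hashlib


def sha256d(data: bytes) -> bytes:
    """Double SHA-256, as used for Bitcoin's hashes."""
    return hashlib.sha256(hashlib.sha256(data).digest()).digest()


def merkle_root(tx_hashes):
    """Fold a list of transaction hashes pairwise until one root hash remains."""
    if not tx_hashes:
        raise ValueError("a block needs at least one transaction")
    level = list(tx_hashes)
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])     # odd count: pair the last hash with itself
        level = [sha256d(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


txs = [sha256d(name.encode()) for name in ("tx-a", "tx-b", "tx-c")]
root = merkle_root(txs)
print(root.hex())
```

Changing any single transaction changes the root, which is why storing just the root in the header is enough to commit to every transaction in the body.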

Now, think about this.

- Did bitcoin really need blocks?

- Did it really need a block chain??

- Satoshi could have simply made a chain of transactions by including the hash of the previous transaction in the newer transaction, making a “transaction chain” so to speak.

The reason why they arrange these transactions in a block is to create one level of interaction and make the whole process more scalable. What ethereum suggests is that they change this into two levels of interaction.

The First Level

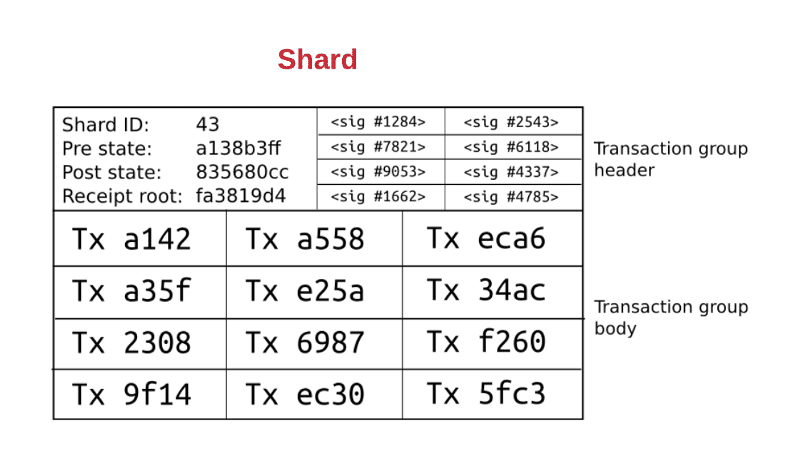

The first level is the transaction group. Each shard has its own group of transactions.

Image courtesy: Hackernoon

The transaction group is divided into the transaction group header and the transaction group body.

Transaction Group Header

- The header is divided into distinct left and right parts.

The Left Part:

- Shard ID: The ID of the shard that the transaction group belongs to.

- Pre state root: This is the state root of shard 43 before the transactions were applied.

- Post state root: This is the state root of shard 43 after the transactions are applied.

- Receipt root: The receipt root after all the transactions in shard 43 are applied.

The Right Part:

- The right part is full of random validators who need to verify the transactions in the shard itself. They are all randomly chosen.

Transaction Group Body

- It has all the transaction IDs in the shard itself.

Properties of Level One

- Every transaction specifies the ID of the shard it belongs to.

- A transaction belonging to a particular shard shows that it has occurred between two accounts which are native to that particular shard.

- Transaction group has transactions which belong to only that shard ID and are unique to it.

- Specifies the pre and post state root.

Now, let’s look at the top level aka the second level.

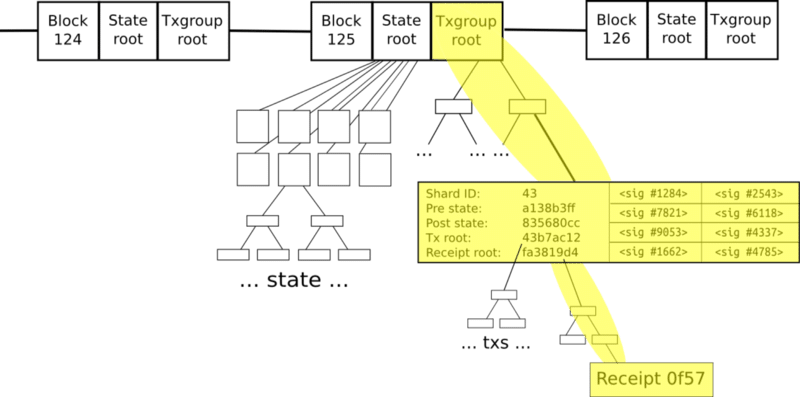

The Second Level

Image courtesy: Hackernoon.

No, don’t be scared! It is easier to understand than it looks.

There is the normal block chain, but now it contains two primary roots:

- The state root

- The transaction group root

The state root represents the entire state, and as we have seen before, the state is broken down into shards, which contain their own substates.

The transaction group root contains all the transaction groups inside that particular block.

Properties Of Level Two

- Level two is like a simple blockchain, which accepts transaction groups rather than transactions.

- Transaction group is valid only if:

  a) Pre state root matches the shard root in the global state.

  b) The signatures in the transaction group are all validated.

- If the transaction group gets in, then the root for that particular shard ID in the global state becomes the group’s post-state root.
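The level two rules above can be written down as a small check. This is only a sketch of the rules as described here: the `TransactionGroup` class, the `apply_group` helper and the root labels are hypothetical, and the signature check is reduced to a simple flag.

```python
from dataclasses import dataclass


@dataclass
class TransactionGroup:
    shard_id: int
    pre_state_root: str
    post_state_root: str
    signatures_valid: bool   # stand-in for verifying the validators' signatures


def apply_group(global_state, group):
    """Accept the group only if both validity conditions hold."""
    if global_state.get(group.shard_id) != group.pre_state_root:
        return False                      # a) pre state root must match the shard root
    if not group.signatures_valid:
        return False                      # b) all signatures must check out
    # Accepted: the shard's root in the global state moves to the post state root.
    global_state[group.shard_id] = group.post_state_root
    return True


global_state = {43: "root-A"}
accepted = apply_group(global_state, TransactionGroup(43, "root-A", "root-B", True))
stale = apply_group(global_state, TransactionGroup(43, "root-A", "root-C", True))
print(accepted, stale, global_state)
```

The second group is rejected because it was built on top of a shard root that is no longer current.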

So how does cross-shard communication happen?

Now, remember our island analogy?

The shards are basically like islands. So how do these islands communicate with each other? Remember, the purpose of shards is to make lots of parallel transactions happen at the same time to increase performance. If ethereum allows random cross shard communication, then that defeats the entire purpose of sharding.

So what protocol needs to be followed for cross-shard communication?

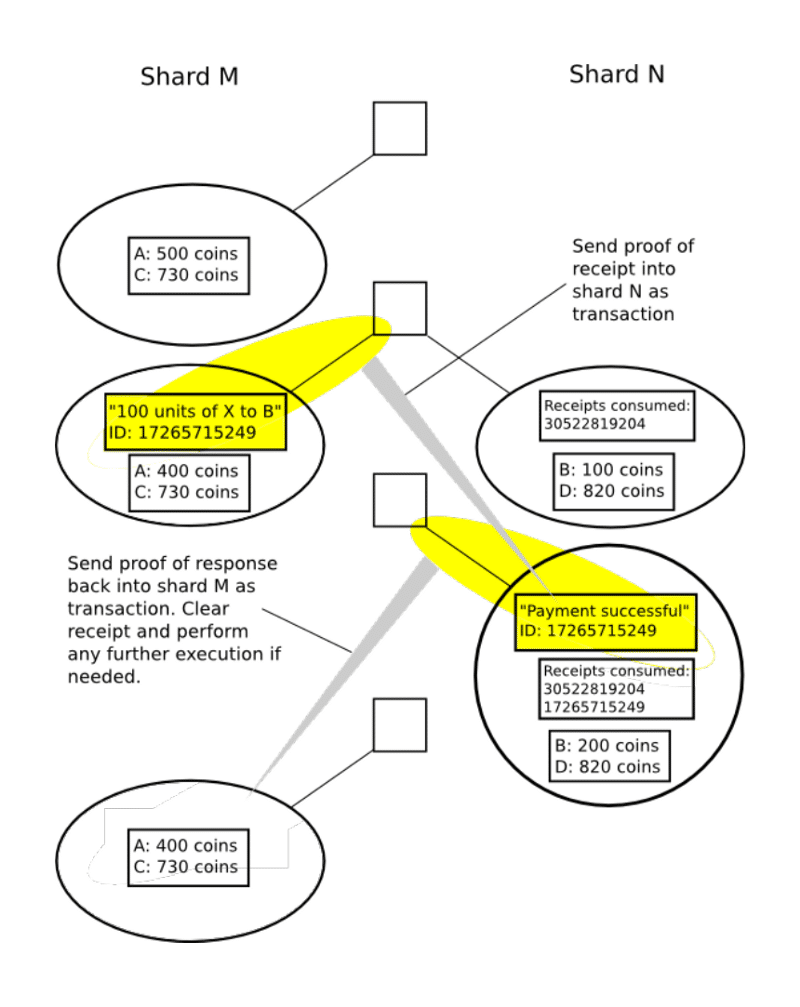

Ethereum chose to follow the receipt paradigm for cross-shard communications. Check this out:

Image courtesy: hackernoon

As you can see here, each individual receipt of any transaction can be easily accessed via multiple Merkle trees from the transaction group Merkle root. Every transaction in a shard will do two things:

- Change the state of the shard it belongs to

- Generate a receipt

Here is another interesting piece of information. The receipts are stored in a distributed shared memory, which can be seen by other shards but not modified. Hence, the cross-shard communication can happen via the receipts like this:

Image courtesy: Hackernoon
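Here is a toy sketch of how a receipt can carry value from one shard to another. It only illustrates the idea as described above; the `Shard` class, the `receipts` list standing in for the shared receipt store, the helper functions and the shard numbers are all made up for illustration.

```python
class Shard:
    def __init__(self, balances):
        self.balances = dict(balances)
        self.spent_receipts = set()     # receipts this shard has already consumed


receipts = []  # stand-in for the shared store: other shards can read it, not edit it


def send_cross_shard(src, sender, dest_shard_id, recipient, amount):
    """On the source shard: change its state and generate a receipt."""
    if src.balances.get(sender, 0) < amount:
        raise ValueError("insufficient funds")
    src.balances[sender] -= amount
    receipt_id = len(receipts)
    receipts.append({"to_shard": dest_shard_id, "recipient": recipient, "amount": amount})
    return receipt_id


def claim_receipt(dest, dest_shard_id, receipt_id):
    """On the destination shard: read the receipt and credit it exactly once."""
    receipt = receipts[receipt_id]
    if receipt["to_shard"] != dest_shard_id or receipt_id in dest.spent_receipts:
        return False
    dest.spent_receipts.add(receipt_id)
    recipient = receipt["recipient"]
    dest.balances[recipient] = dest.balances.get(recipient, 0) + receipt["amount"]
    return True


shard_1 = Shard({"alice": 10})
shard_2 = Shard({"bob": 0})

rid = send_cross_shard(shard_1, "alice", dest_shard_id=2, recipient="bob", amount=3)
first_claim = claim_receipt(shard_2, 2, rid)
second_claim = claim_receipt(shard_2, 2, rid)
print(shard_1.balances, shard_2.balances, first_claim, second_claim)
```

The two shards never touch each other’s state directly. The only thing they share is the receipt, and remembering which receipts were already used keeps it from being claimed twice.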

What are the challenges of implementing sharding?

- There needs to be a mechanism to know which node implements which shard. This needs to be done in a secure and efficient way to ensure parallelization and security.

- Proof of stake needs to be implemented first to make sharding easier, according to Vlad Zamfir. In a proof-of-work system it will be easier to attack shards with lower hashrate.

- The nodes work on a trustless system, meaning node A doesn’t trust node B and they should both come to a consensus regardless of that trust. So, if one particular transaction is broken up into shards and distributed to node A and node B, node A will have to come up with some sort of proof mechanism that they have finished work on their part of the shard.

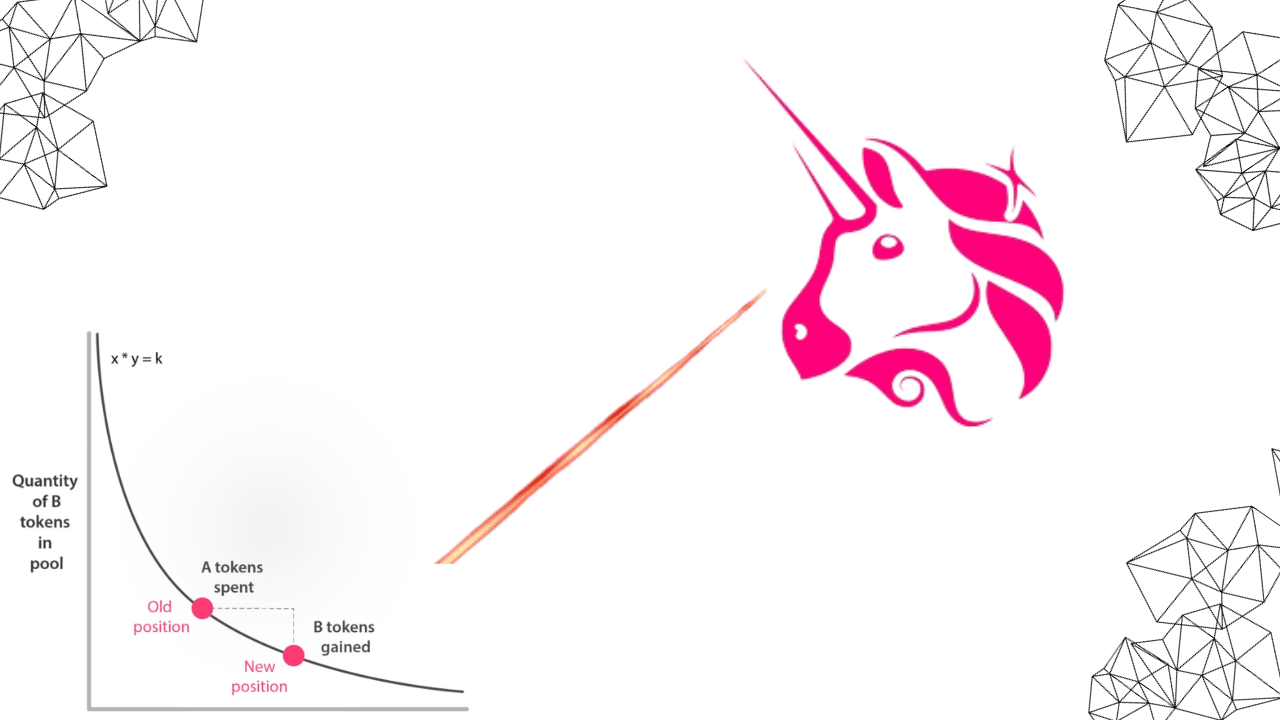

Off-Chain State Channels

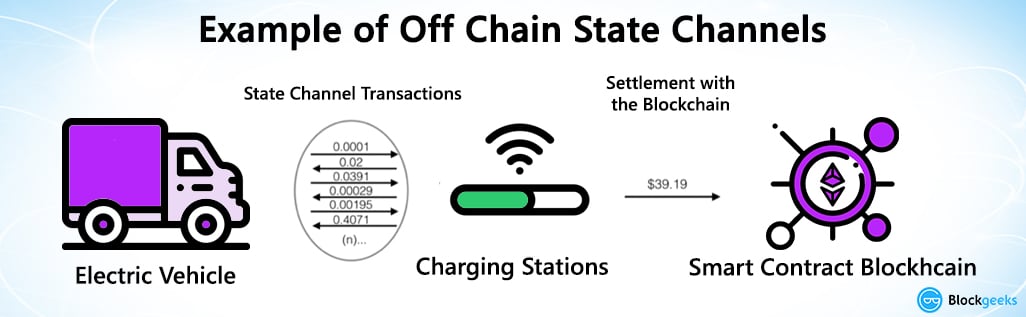

What is a state channel?

A state channel is a two-way communication channel between participants which enables them to conduct interactions, which would normally occur on the blockchain, off the blockchain. What this will do is decrease transaction time dramatically, since you are no longer dependent on a third party like a miner to validate your transaction.

So what are the requirements to do an off-chain state channel?

- A segment of the blockchain state is locked via multi-signature or some sort of smart contract, which is agreed upon by a set of participants.

- The participants interact with each other by signing transactions among themselves without submitting anything to the miners.

- The entire transaction set is then added to the blockchain.

The state channels can be closed at a point which is predetermined by the participants, according to Slock.it founder Stephan Tual. It could either be:

- Time lapsed eg. the participants can agree to open a state channel and close it after 2 hours.

- It could be based on the total amount of transactions done, e.g. close the channel after $100 worth of transactions have taken place.

Image Courtesy: Stephan Tual Medium Article

So, in the image above, we have a car which directly interacts with the charger and does a total of $39.19 worth of transactions. Finally, after a series of interactions, the entire transaction chunk is added to the blockchain. Imagine how much time it would have taken if they had to run every single transaction through the blockchain!
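The car and charger example can be sketched as a tiny state channel. This is a toy model under loud assumptions: HMAC with made-up secret keys stands in for real digital signatures, the $50 deposit and the three payment amounts are invented (only the $39.19 total comes from the example), and the `StateChannel` class is hypothetical.

```python
import hashlib
import hmac


def sign(secret: bytes, message: bytes) -> str:
    # HMAC is only a stand-in here; a real channel uses public-key signatures.
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


class StateChannel:
    def __init__(self, keys, deposit_cents):
        self.keys = keys                    # participant -> secret key
        self.deposit_cents = deposit_cents  # funds locked on-chain when the channel opens
        self.nonce = 0
        self.paid_cents = 0
        self.signatures = {}

    def update(self, amount_cents):
        """Off-chain step: both parties sign the new running total."""
        new_total = self.paid_cents + amount_cents
        if new_total > self.deposit_cents:
            raise ValueError("payment exceeds the locked deposit")
        self.nonce += 1
        self.paid_cents = new_total
        message = f"{self.nonce}:{new_total}".encode()
        self.signatures = {name: sign(key, message) for name, key in self.keys.items()}

    def close(self):
        """On-chain step: only the final, doubly signed state is submitted."""
        return {"nonce": self.nonce, "paid_cents": self.paid_cents,
                "signatures": self.signatures}


channel = StateChannel({"car": b"car-key", "charger": b"charger-key"}, deposit_cents=5_000)
for payment in (1_000, 1_500, 1_419):       # hypothetical charging increments, in cents
    channel.update(payment)

final_state = channel.close()
print(final_state["nonce"], final_state["paid_cents"])
```

Three payments happen between the car and the charger, but only one final state, worth $39.19, ever needs to reach the blockchain.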

The off-chain state channel that bitcoin is looking to implement is the lightning network.

What is the lightning network?

The lightning network is an off-chain micropayment system which is designed to make transactions work faster in the blockchain. It was conceptualized by Joseph Poon and Tadge Dryja in their white paper, which aimed to solve the block size limit and the transaction delay issues. It operates on top of Bitcoin and is often referred to as “Layer 2”. As Jimmy Song notes in his medium article:

“The Lightning Network works by creating a double-signed transaction. That is, we have a new check that requires both parties to sign for it to be valid. The check specifies how much is being sent from one party to another. As new micro-payments are made from one party to the other, the amount on the check is changed and both parties sign the result.”

The network will enable Alice and Bob to transact with each other without being held captive by a third party aka the miner. In order to activate this, the transaction needs to be signed off by both Alice and Bob before it is broadcast into the network. This double signing is critical in order for the transaction to go through.

However, here is where we face another problem.

Since the double check relies heavily upon the transaction identifier, if for some reason the identifier is changed, this will cause an error in the system and the Lightning Network will not activate. In case you are wondering what the transaction identifier is, it is the transaction name aka the hash of the input and output transactions.

This is the transaction identifier.

A bug called “Transaction Malleability” can cause the transaction identifier to change. However, this will not be a problem anymore, because Segwit activation removes this bug.

Ethereum is also planning to activate something like the lightning network which is called “Raiden”.

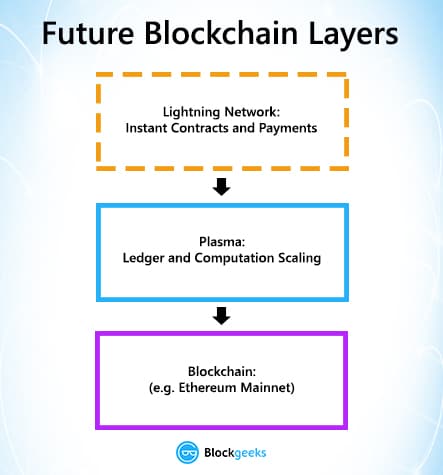

Plasma

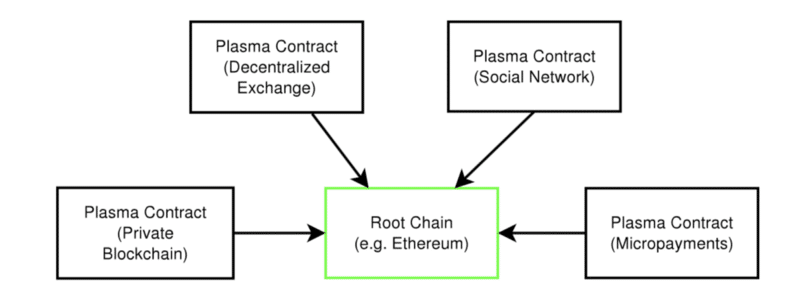

Plasma along with lightning network/Raiden will introduce a whole new layer to the ethereum architecture:

Image courtesy: Medium.

Plasma is a series of contracts that run on top of the root chain (the main Ethereum blockchain). If one were to envision the architecture and the structure, then think of the main blockchain and the plasma blockchains as a tree. The main blockchain is the root while the plasma chain aka child blockchains are the branches.

This greatly reduces the load on the main chain. Periodically the branches keep sending reports to the main chain. In fact, you can view the Root Chain as the supreme court and all the branches as the subordinate courts which derive their powers from the main court.

All the branch chains can issue their own unique tokens which can incentivize chain-validators to take care of the chains and to ensure that they are fault-free. Each branch has its own independent data and when it does need to submit some data to the main chain, it doesn’t dump all its contents, it just submits the block header hash to the main chain.
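The “submit only the block header hash” idea can be sketched in a few lines. This is a toy illustration, not the Plasma contracts themselves: the `RootChain` class, the header fields and the use of SHA-256 over a JSON dump are all assumptions made for the example.

```python
import hashlib
import json


class RootChain:
    """Stand-in for the contract on the main chain that collects commitments."""

    def __init__(self):
        self.commitments = []   # one short hash per child block, nothing else

    def submit(self, block_header_hash: str):
        self.commitments.append(block_header_hash)


def header_hash(header: dict) -> str:
    # Hash a canonical (sorted-key) JSON encoding of the header.
    return hashlib.sha256(json.dumps(header, sort_keys=True).encode()).hexdigest()


root_chain = RootChain()

child_block = {
    "header": {"number": 1, "parent": "0" * 64, "tx_count": 3},
    "transactions": ["tx-1", "tx-2", "tx-3"],   # this data stays on the child chain
}

root_chain.submit(header_hash(child_block["header"]))
print(root_chain.commitments)
```

However many transactions the child block holds, the main chain only ever stores one fixed-size hash for it.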

Not only does plasma save a lot of space on the main chain, it could also increase transaction processing speed substantially. If implemented properly, this could be one of the most revolutionary changes ever made to ethereum and cryptocurrency in general.

Looking Ahead – Blockchain Scalability

Cryptocurrencies, and especially bitcoin and ethereum, are becoming more and more mainstream. In order to keep pace with the increased usage, they need to seriously step it up when it comes to scalability. Fortunately, there are some fascinating solutions which could give them some very interesting results. Can they truly solve the scalability issue though? That remains to be seen.

I am not even close to you all in your expertise, but, this last statement of “…well established theorem that security of the blockchain protocol is inversely related to the product of the block size and block creation rate.” makes no sense to me. Security is established by the immutability of the blockchain itself. If by “secure” you mean the 51% attack probability, that is not dependent on the block size and block creation rate, but, dependent on the computational capability of an entity to out-compute all the other full or mining nodes on the network. That is dependent on number of nodes N that has absolutely nothing to do with the block size and block creation rate. So, I am puzzled by this last statement.

DanielSzego Am I sure about these questions specifically regarding IOTA? No, of course not. I think that the Markov chain Monte Carlo algorithm for tip selection they have proposed could be just as secure as PoW, PoS or Casper, but they have not proved this formally or even empirically, so who knows. In fact, the specific MCMC algorithm is not even yet set in stone, neither in its general form nor in its parameters I do not think. They currently have a closed-source coordinator approving checkpoints as they experiment with their consensus mechanism (which essentially hinges on the MCMC algorithm, and whether this MCMC algorithm would be every node’s optimal strategy for tip selection, since it can’t be enforced by the protocol). The SPECTRE paper claims to have rigorously and formally proved that their protocol is just as secure as PoW, and infinitely more scalable. I have not gone through every proof, nor would I be qualified to do so as I do not have a doctorate in computational science or mathematics, but they do. Of course even doctorates make mistakes. My general belief is that blockchain has a decentralization, scalability, security (DSS) trilemma. Read more about that here (note he calls security consistency, but I think it is better described as security … whatever): https://blog.bigchaindb.com/the-dcs-triangle-5ce0e9e0f1dc. An improvement in one of these three necessarily comes with a cost of one or both of the others. This cannot be solved with any of the tweaks or second-layer solutions mentioned in this article, however there may be an ‘optimal’ level of DSS which one of these solutions comes closer to. Note that ‘optimal’ is also likely subjective, people value each property of DSS differently, and this may also be specific application dependent. This is why I think the DAG offers a…

In my opinion and from my understanding, the problem is inherent to the distributed nature of cryptocurrencies because thousands of computers have to agree on transactions before they are posted. The only way to solve this is to make a crypto currency require less verification and that opens things up to fraud. Or improve ping for all the computers on the block chain, and that can’t really be controlled. There really isn’t a solution to this right now.

Also, the number of transactions should not cause any increase in processing times. The transactions can be, and are, processed in batches, so as long as the number of computers increases proportionally to the number of transactions, which it should, processing time will be the same.

And if you think in terms of processing times credit/debit cards are the worst. Transactions stay pending for a day at least, and aren’t actually processed until then. The problem is the cards are centrally controlled so they can make guarantees the transaction will definitely be processed and if it isn’t then the bank/card company handle the discrepancies. So technically the processing times are “faked”. With crypto since there is no central authority this can’t be done. So the transaction time for crypto is actual transaction time, if the transaction is complete, then it really is complete.

Technically the only faster way to truly transfer money is paypal (or similar services), and it is very close to instant, but only as long as the money is in your wallet. If it isn’t, it’s limited by your bank, which again takes at least a day.

The fundamental problem is the blockchain itself. A Directed Acyclic Graph (DAG) is a better model. See IOTA (already released, but protocol still undergoing changes) or SPECTRE (still in development). https://iota.org/IOTA_Whitepaper.pdf

https://eprint.iacr.org/2016/1159.pdf

As far as I know, IOTA is mainly designed for internet of things use cases, meaning that the design goal was a high transaction speed, but perhaps less general security. Are you sure that the DAG design of transaction confirmation provides the same general system security as, for instance, proof of work, proof of stake or casper protocols? Or the same security in the sense of solving the general byzantine problem?

Totally agree with you. Check out Yobicash, also based on DAG:

https://yobicash.org/whitepaper.pdf